Introduction

Students of the health care professions generally undertake a number of clinical placements during their training. Whilst they are in practice, a clinical practitioner will assess the student’s competence against a set of learning outcomes and give ongoing feedback to the student. Due to the workload of the supervising practitioner, the assessment processes can be fragile, which in turn can impinge on the students’ learning. At the same time, students in practice are away from their usual learning environment, and it can be difficult for them to access their learning resources at the time that they discover the need. The principle on which this project is based is that practice-based learning, and in particular the mentoring process, would be improved if the student and mentor had access to tools that allowed on-the-spot online entry of assessment results, so that feedback would be immediate and follow-up actions could be decided instantly.

This project aims to provide a mobile learning toolkit to support practice-based learning, mentoring and assessment. This toolkit will provide an interface so that a course leader can specify, in a flexible manner, the learning outcomes to be met; the method of assessment (including the form of the result, how it will be recorded, and by whom); the timing of the assessment(s); the feedback to be given in response to the results; suitable learning resources to support these learning outcomes; and the actions to be taken when assessments are not completed in a timely manner. Such a toolkit could be used on a variety of programmes, both HE and FE, and in clinical and non-clinical contexts, where workplace assessment is an integral part of the course.

The toolkit will enable deployment of the mentor’s assessment interface on a number of platforms, ranging from PCs through to PDAs and Smartphones, and will also provide tools such as RSS feeds to simplify distribution of the learning resources. The project will contribute to the JISC community by adding mobile assessment tools to the e-Framework.

The consortium is a well-connected group. The Computer Scientists who will provide the toolkit have previously worked together within the JISC Reference Model projects. Within both TVU and Southampton, the Computer Scientists have previously worked with their Nursing and Healthcare departments. Southampton and TVU have both recently been placed in the same NHS area, and Bournemouth and Poole College is an established FE partner for Southampton University. The following scenario, taken from Nursing, illustrates the problems and the need for such a toolkit.

Nursing Scenario

Pre-registration nursing students spend 50% of their 3-year programme in clinical practice, undertaking a series of placements in different areas of the healthcare system. Whilst in practice, students are supported by mentors for the duration of their placement. Mentors are also required to assess the students’ competence in practice against a set of learning outcomes detailed in the practice assessment booklet or practice portfolio. These are summative assessments which students are required to pass in order to register as a nurse at the end of their programme. Students are expected to complete a preliminary, an interim and a final interview with their mentor. The interim interview is crucial, as it is at this point that a student who is failing to progress is likely to be identified and action plans can be put into place. This good practice of induction, interim and final assessment is common to most educational situations in which students experience work-based learning.

The HEI has a responsibility to assure the quality of the assessment process in practice placements as well as within the HEI itself. To achieve this, the HEI has a duty to ensure that mentors are well informed about the assessment process and the curriculum the students are following. This is, however, a major challenge, both because of the large number of mentors (several thousand for any HEI) and because workplace pressures make it difficult for mentors to be released from practice to attend updates on the assessment process and curriculum.

Issues around ensuring that students are fit for practice at the point of registration were brought home recently by a report by Duffy (2004)1, which found that mentors were failing to fail students due to a number of factors, such as lack of confidence, concerns over personal consequences (for student and self), and leaving it too late to implement formal procedures (the preliminary and interim interviews being missed or undertaken so late that action plans to assist a student who is not progressing satisfactorily are not put in place). There is also the challenge of supporting a large number of mentors across a wide geographical area. Whilst academic staff from the HEI are expected to provide support to mentors, providing it at the precise time mentors require it can be problematic.

What is needed?

Assessment-targeted resources using mobile technologies that offer mentors and students assistance and support in the assessment process at the time it is needed. These resources could offer:

- Prompts for following the assessment process re preliminary, interim and summative interviews

- Guidance on interpretation of the competencies for mentors and students

- Access to resources to aid the assessment process

- Links to resources targeted to support students’ learning and assessment outcomes

- Electronic recording of the assessment process to enable:

  - Checking of inter-assessor reliability

  - Feedback mechanisms for mentors on the assessment process

  - Ongoing records of achievement
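The first item in the list, prompting mentors to follow the interview sequence, can be illustrated with a minimal Java sketch. It is not part of the proposed design: the class names (`InterviewStage`, `InterviewPrompts`) and the due points (10%, 50% and 100% of the placement length) are assumptions chosen purely for illustration, since the proposal leaves such timings for the course leader to specify.

```java
import java.time.LocalDate;
import java.time.temporal.ChronoUnit;
import java.util.ArrayList;
import java.util.EnumSet;
import java.util.List;
import java.util.Set;

/** The three interview stages named in the nursing scenario. */
enum InterviewStage { PRELIMINARY, INTERIM, FINAL }

/** Hypothetical helper that works out which interviews a mentor should be prompted about. */
final class InterviewPrompts {

    /** Assumed due point for each stage, as a fraction of the placement length (illustrative only). */
    static double dueFraction(InterviewStage stage) {
        switch (stage) {
            case PRELIMINARY: return 0.10;
            case INTERIM:     return 0.50;
            default:          return 1.00; // FINAL: due on the last day of the placement
        }
    }

    /** Date by which the given interview should have taken place. */
    static LocalDate dueDate(LocalDate start, LocalDate end, InterviewStage stage) {
        long placementDays = ChronoUnit.DAYS.between(start, end);
        return start.plusDays(Math.round(placementDays * dueFraction(stage)));
    }

    /** Interviews that are past their due date and have not yet been recorded. */
    static List<InterviewStage> overdue(LocalDate start, LocalDate end,
                                        Set<InterviewStage> completed, LocalDate today) {
        List<InterviewStage> result = new ArrayList<>();
        for (InterviewStage stage : InterviewStage.values()) {
            if (!completed.contains(stage) && today.isAfter(dueDate(start, end, stage))) {
                result.add(stage);
            }
        }
        return result;
    }
}

/** Small demonstration with made-up placement dates. */
final class InterviewPromptDemo {
    public static void main(String[] args) {
        LocalDate start = LocalDate.of(2006, 9, 4);
        LocalDate end = LocalDate.of(2006, 10, 30);
        Set<InterviewStage> completed = EnumSet.of(InterviewStage.PRELIMINARY);
        // Lists the stages the mentor should be prompted about on 5 October 2006.
        System.out.println(InterviewPrompts.overdue(start, end, completed, LocalDate.of(2006, 10, 5)));
    }
}
```

Because the electronic record knows which interviews have been entered, a prompt of this kind could be raised while there is still time to put an action plan in place, which is the failure mode described in the scenario above.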

1.2. The Proposed Toolkit

Other projects that have looked at supporting learning through mobile devices have built large systems that integrate information in a fixed and pre-designed way. For example, the Nightingale Tracker2 uses a central database and provides data and communication tools built around it, and the Chawton House project uses a sophisticated orchestration engine to drive an outdoors learning experience based on proprietary activity cards3. However, the whole-system approach is inherently heavyweight and inflexible, and has led to criticisms that mobile learning is technology-led and pedagogically naive4. Standalone tools such as the "ME" mobile workbook5 or the cell-phone English vocabulary trainer6 offer a simpler alternative that fits more flexibly with existing practice, but these lack the integration qualities of the whole-system approach.

In this project we propose to take the best from both approaches by entering into a co-design process with practitioners that will result in a toolkit for mobile learning tailored to their existing practice. Without wanting to pre-empt the co-design process, we might see simple tools for tasks such as returning feedback to students, leaving messages for mentors, or monitoring students’ progress through their study whilst in the clinical environment.

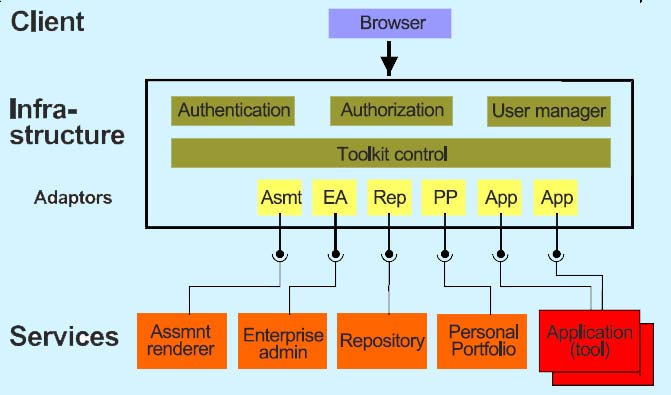

The toolkit will be flexible in that the tools will not rely on one another to be effective; however, building the toolkit on top of the existing e-Framework will allow some of the advantages of the whole-system approach to apply. For example, if there is a central assessment system then the feedback tool mentioned above would access it through the framework, and the feedback events would be reflected in the progress tool. Use of the e-Framework will also allow the potential use of alternative tools for specific needs.
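The loose coupling described here can be sketched in Java as follows. This is only an illustration under stated assumptions: `FrameworkBus` is a hypothetical in-memory stand-in for the framework layer, not an e-Framework API, and `FeedbackTool`, `ProgressTool` and `FeedbackEvent` are invented names. The point it shows is that the feedback tool works on its own, while the progress tool reflects feedback events whenever it happens to be connected.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** A feedback event: a mentor has returned feedback on one learning outcome. */
final class FeedbackEvent {
    final String studentId;
    final String outcomeId;
    final String comment;

    FeedbackEvent(String studentId, String outcomeId, String comment) {
        this.studentId = studentId;
        this.outcomeId = outcomeId;
        this.comment = comment;
    }
}

/** Anything that wants to be told about feedback events. */
interface FeedbackListener {
    void onFeedback(FeedbackEvent event);
}

/** Hypothetical stand-in for the framework layer: tools talk to it, never directly to each other. */
final class FrameworkBus {
    private final List<FeedbackListener> listeners = new ArrayList<>();

    void subscribe(FeedbackListener listener) {
        listeners.add(listener);
    }

    void publish(FeedbackEvent event) {
        for (FeedbackListener listener : listeners) {
            listener.onFeedback(event);
        }
    }
}

/** Feedback tool: usable even when no other tool is subscribed. */
final class FeedbackTool {
    private final FrameworkBus bus;

    FeedbackTool(FrameworkBus bus) {
        this.bus = bus;
    }

    void returnFeedback(String studentId, String outcomeId, String comment) {
        bus.publish(new FeedbackEvent(studentId, outcomeId, comment));
    }
}

/** Progress tool: counts the feedback events it has seen for each student. */
final class ProgressTool implements FeedbackListener {
    private final Map<String, Integer> feedbackCount = new HashMap<>();

    @Override
    public void onFeedback(FeedbackEvent event) {
        feedbackCount.merge(event.studentId, 1, Integer::sum);
    }

    int feedbackCountFor(String studentId) {
        return feedbackCount.getOrDefault(studentId, 0);
    }
}
```

A deployment that only needs the feedback tool would simply never subscribe a `ProgressTool`; swapping in an alternative progress tool would mean subscribing a different `FeedbackListener`.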

This project is intended to be a practical mechanism for engaging technologists with practitioners with real results that can be deployed in real teaching environments, and as such it will concentrate on simple tools that perform small but valuable tasks. Later work will then be able to build upon the mobile toolkit and the e-Framework to deliver more novel applications and begin an informed innovation of mobile pedagogy within the nursing domain.

Given the increasingly complex, changing and technical health care working environments, it is difficult to trial product design, development and evaluation within these environments without detriment to users of the health services. The ability to test the toolkit in a simulated environment that can reliably ‘approximate’ reality in a controlled manner is highly attractive. The Clinical Skills Laboratory associated with the Virtual Interactive Practice developments in the University of Southampton, School of Nursing and Midwifery is intended to form the test bed for product development prior to application with users in ‘real’ clinical practice settings7,8. This will enable us to test the toolkit with students and practitioners within a simulated environment that ‘mimics’ the reality of clinical pressure, movement and context. Use of the toolkit in a safe, controlled environment will facilitate experimentation, design and application of the tool for user acceptance, friendliness, practicality, reliability, validity and educational merit.

Clinical environments are generally very busy, complex environments. The key focus for mentors has to be the safety and wellbeing of their patients/clients. The time a mentor is able to spend teaching students is variable but tends to be limited. Students from a number of HEIs and different educational programmes may be placed in the same clinical environment at the same time. Each has individual learning outcomes and competencies that they have to achieve during the course of the placement. These variables are further complicated by the need to complete, often in-depth, assessment records of achievement for the individual learners. At the least this can be confusing for the mentor. An electronic toolkit that includes a repository of updated support and guidance materials would enable mentors to work with students to negotiate appropriate objectives, thus ensuring the optimum learning for the student. Although hard copies of these materials are available in the practice areas, they tend to be large, heavy and bulky. Paper copies of the materials easily become lost or incomplete and may be taken away from the designated areas. The toolkit would allow all mentors and students to have immediate access to these resources both within and outside the practice area, with the assurance of completeness and currency of the materials.

Although the responsibility of providing the assessment of practice portfolio documentation for the mentor to complete lies with the individual student, this can present some difficulties because of the physical size of the portfolio. Some students try to resolve this by splitting off sections of the portfolio that are relevant to that placement but this means that the mentor cannot perform the assessment in the context of the whole student achievement and experience to date. This can make it difficult to see an overall pattern of progression. Having the documentation available electronically would resolve these problems.

2. Project description

The project envisages the development of three technical components:

- A 'back office' infrastructure or framework to support the deployment of the proposed toolkit.

- A selected tool of the toolkit, such as monitoring students' progress through their study.

- Another selected tool of the toolkit, such as returning feedback to students.

Each component (the infrastructure and two tools) is developed following the standard systems engineering approach of systems analysis and requirements specification, initial and detailed design, implementation (coding and unit testing), piloting (alpha and beta testing, review, and enhancement), and deployment. In general there will be a strong alignment to the Rational Unified Process.

The three components will be developed in two overlapping stages. The first stage provides for the development of the back office support and the development of one tool, and the second stage provides for the development of a second tool. The first stage leads the second by 6 months, to allow the specification of the second tool to benefit from a period of experience and reflection upon progress to date with the back office and first tool development.

Figure 1. System architecture

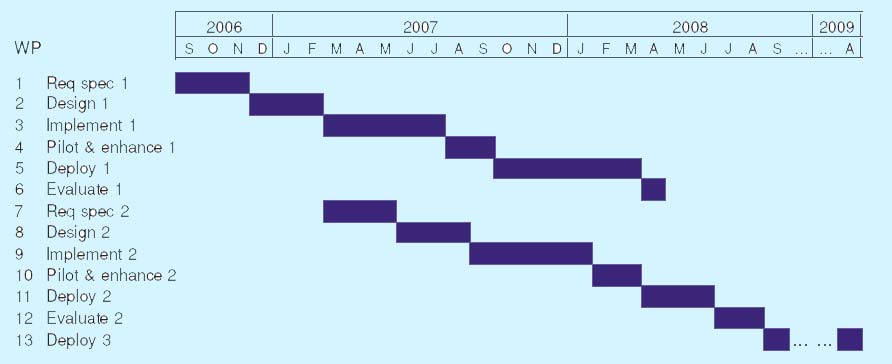

The technical components will be deployed in three phases. Phase 1 comprises product design, pilot, and evaluation within a simulated environment. Phases 2 and 3 involve the application and implementation of the toolkit within real clinical contexts. Students and staff will be involved in all Phases; however in Phases 2 and 3, the interactions within the clinical context, staff and health service users will require careful control and monitoring. Phase 3 occurs in the third year of the project, funded entirely by the partner institutions, following the first two years which are partially JISC-funded. Phase 1 deployment will take place at the School of Nursing and Midwifery (NMW), while Phase 2 and 3 deployment will take place within clinical contexts associated with the University of Southampton Foundation Degree in Health and Social Care located within the Health Care Innovation Unit (HCIU), Thames Valley University (TVU), and Bournemouth and Poole College (BPC). Throughout the project, monthly management meetings will take place with NMW, HCIU, TVU, and BPC personnel under the project management of the Learning Technologies Group (LTG). The project comprises 13 workpackages, illustrated in the Gantt chart of Figure 2, and described in more detail below.

2.1. Workpackages

The workpackages are listed below in approximate chronological (scheduled) order, starting in September 2006. Their ordered work breakdown structure is as illustrated in Figure 2.

Workpackage 1: Requirements specification 1. WP 1 involves the preparation and delivery of the systems analysis and requirements specification for the back office infrastructure or framework to support the proposed toolkit, and for the first tool of the toolkit. WP 1 also involves specification of procedures for ethics and governance for all sites. The deliverables will be led by LTG and prepared in collaboration with NMW, HCIU, and TVU, scheduled for 3 months.

Workpackage 2: Design 1. WP 2 involves the preparation and delivery of the initial and detailed designs for the back office infrastructure and the first tool. The design deliverables will be led by LTG and prepared in collaboration with TVU, scheduled for 3 months. WP 2 also involves the design of simulation scenarios within which the toolkit can be piloted, and the design of evaluation and analytical tools with respect to user satisfaction, educational utility, effectiveness and practicality.

Workpackage 3: Implement 1. WP 3 involves programming, unit testing, and alpha testing of the back office infrastructure and the first tool. Software development will be led by LTG in collaboration with TVU, scheduled for 5 months. WP 3 also involves the selection and recruitment, led by NMW in collaboration with HCIU, TVU, and BPC, of clinical sites for Phase 2 and 3 deployment.

Workpackage 7: Requirements specification 2. WP 7 involves the preparation and delivery of the systems analysis and requirements specification for the second tool of the toolkit. The deliverable will be led by LTG in collaboration with NMW, HCIU, and TVU, scheduled for 3 months.

Figure 2. Project outline workpackages schedule

Workpackage 4: Pilot and enhance 1. WP 4 involves beta testing, evaluation, and enhancement of the back office infrastructure and the first tool. Testing and evaluation will be led by NMW in collaboration with LTG, HCIU, and TVU, while software enhancement will be led by LTG in collaboration with TVU, the whole workpackage being scheduled for 2 months. WP 4 also involves the selection and recruitment of students, educational, and practice staff to form a reference group for deployment.

Workpackage 9: Implement 2. WP 9 involves programming, unit testing, and alpha testing of the second tool. The software will be led by LTG in collaboration with TVU, scheduled for 5 months.

Workpackage 5: Deploy 1. WP 5 deploys the back office infrastructure and the first tool in clinical contexts associated with NMW, HCIU, TVU, and BPC. Deployment will be undertaken by NMW, HCIU, TVU and BPC, supported and project managed by LTG, and is scheduled for 6 months to coincide with the first and the early part of the second academic semesters of the partner Universities and College.

Workpackage 10: Pilot and enhance 2. WP 10 involves beta testing, evaluation, and enhancement of the second tool. Testing and evaluation will be led by NMW in collaboration with LTG, HCIU, and TVU, while software enhancement will be led by LTG in collaboration with TVU, the whole workpackage being scheduled for 2 months.

Workpackage 6: Evaluate 1. WP 6 involves the preparation and delivery of an interim toolkit evaluation dealing with the back office infrastructure and the first tool of the toolkit. Evaluation will be led by NMW in collaboration with HCIU, TVU, and BPC, supported and project managed by LTG, and is scheduled for 1 month.

Workpackage 12: Deploy 2. WP 12 deploys the second tool in clinical contexts associated with NMW, HCIU, TVU, and BPC. Deployment will be undertaken by NMW, HCIU, TVU and BPC, supported and project managed by LTG, and is scheduled for 3 months to coincide with the second academic semester of the partner Universities and College.

Workpackage 11: Evaluate 2. WP 11 involves the preparation and delivery of an overall project evaluation and final report dealing with the JISC-funded Phases 1 and 2 of deployment. Evaluation will be led by NMW in collaboration with HCIU, TVU and BPC, supported and project managed by LTG, and is scheduled for 2 months.

Workpackage 13: Deploy 3. WP 13 deploys Phase 3 of the project, the back office infrastructure and both tools, in clinical contexts associated with NMW, HCIU, and TVU, and in clinical and non-clinical contexts associated with BPC. Deployment will be undertaken by NMW, HCIU, TVU and BPC, supported and project managed by LTG, and is scheduled for 12 months to coincide with the complete academic year of the partner Universities and College. Within BPC, additional non-clinical groups of students undertaking work-based assessment on a range of courses (full-time, part-time, 16-19, and 19+) would be selected and a contrast made to control groups where the toolkit was not in use.

2.2. Standards and QA

The software developed in this project will be Java based, and the project will take a Service Oriented Architecture (SOA) approach, with the services written in Java. Coding standards will be adopted to ensure readability, testability and installability. Code will be unit tested using JUnit. The project will build upon existing specifications and standards from JISC, IMS, and other projects. In particular, it is expected to reference agreed standards such as SOAP and WSDL of the W3C, the Question and Test Interoperability (QTI) version 2 specification of IMS, and the Remote Question Protocol (RQP), which provides remote processing of assessment items on behalf of assessment systems9. Compliance with SOAP will be assured using an appropriate testing package (e.g. SOAPscope). Full account will be taken of issues relating to accessibility of Web-based systems and software, and the outputs of this project will conform to published standards and guidelines.

Members of the team are already helping to define and clarify how the e-Framework will work. This project will provide further substantive use of the JISC standards emanating from the e-Framework. In particular, the project will contribute exemplars of documentation regarding service specification, service genres and other artefacts.

2.3. Quality Management

Quality management in this project will be addressed by focusing on three aspects: Quality Assurance, Quality Planning and Quality Control.

JISC has developed guidelines on quality assurance which cover areas such as the establishment of frameworks (procedures and standards), project management guidelines and policies on open source software development. This project will use these guidelines and enhance them as appropriate. Quality Planning will develop a quality plan at the start of the project. This plan will describe standards for document production (e.g. requirements specifications, the use of a project glossary, non-functional requirements). In addition, the plan will describe standards for process management such as:

- Version and configuration management for software development activities;

- Change management control;

- Requirements tracking;

- Guidance on use of specific tools (e.g. IBM Rational Rose XDE, IBM Requisite Pro, MS Project).

2.4. IPR, sustainability

The code will be published on SourceForge under an appropriate open source agreement and may be used within any educational establishment, in line with JISC’s requirements and as per the terms and conditions of JISC grants. The University and its partners will retain shared IPR on the learning content, the software artefacts, and associated documentation. This will be confirmed via a Consortium agreement defining IPR arrangements that conform to JISC requirements.

Sustainability of the code produced will be achieved by ensuring that other institutions and JISC/CETIS projects have access to the code and documentation for the system, through LGPL or GPL licences. Quality factors built in to the workpackages will ensure successful Open Source life through achievement of a good OSMM rating and community stated need.

All reports, tools and code from the project will remain on the project server for a minimum period of 3 years and will be archived in the institutional repository (E-Prints) and an appropriate JISC repository.

9 RQP was developed as part of the Serving Maths project: http://mantis.york.ac.uk/moodle/